This is my first post (actually second, but it is only translation of first) where I’d like to introduce a theoretic basis of Documents Clustering or Clustering in general which topic is close to me recently.

What does Document Clustering is about?

So, to explain Document Clustering it is good idea to define what is Clustering in general before.

Human is a being that likes to join things like objects, facts persons into groups or categories (here let’s call their clusters) to better or easier understand a world around. Let’s get people as an example. Imagine that you go the street and describe people you pass by sentences like: old man, another old man, nice girl, not very nice girl etc. We could describe them by their age, height, hair colour, style of wearing etc. In short, we could find many informations their would be categorised by.

So, what is this Clustering?

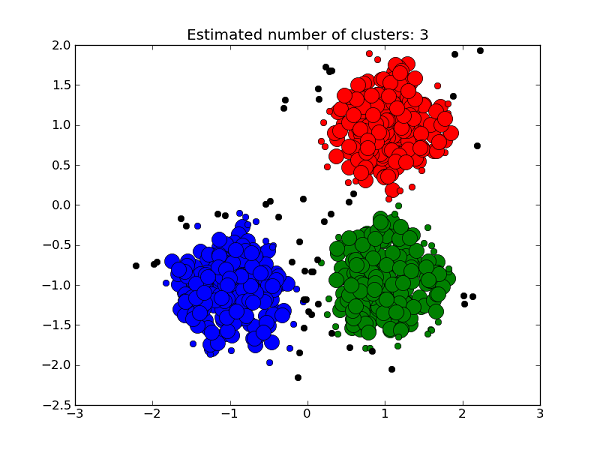

By definition we could say, clustering is a process of assignment of objects into groups where object in group is more similar to objects in this group than to all other groups. Result of this process are object of upper class (of abstraction), groups in which objects should be homogeneous or at least similar to each others.

What does that definition tell us?

So, having a set that contais some undefined strictly number of people we want to cluster, result should be groups where in range of group people would be related by some attribute that was used to describe them. Did we describe people by their hobbys, here we are, the result going to be groups related to same or similar hobby.

How does it look in practice?

Clustering is operation on a points.

Exacly. Points are grouped by distance between them and that’s how they are assigned to clusters. In most cases there is estimated a center point of cluster, centroid and then points are assigning to the nearest centroids.

Coming back to our example with people. How could we „clusterize” people if clustering algorithms work on points, numbers?

The way to do that is called vectorization. It is a proccess that converts more abstract objects like documents (or people as in example) into form of numbers. Products of this process, vectors are matrices with one column (or row if matrix is transformed). Each element of vector is related to one property’s numeric representation. Value of element means a weight, a extent of related attribute with object.

Let’s say we want to describe some group of people with wectors. We get 3 properties describing them, the easiest properties to vectorize would be: age, height and weight as they are numbers.

So, vector looks like:

[age, height, weight]

For example: [18, 182, 85], [25, 164, 58], [35, 170, 89], [53, 179, 88].

We’ve got a point in 3D space that describes people by mentioned attributes then it should not be a problem to create groups with similarities of age, height or weight. Obviously 4 points are not enough to do this effectively, so we need it more.

But wait.

As we know, clustering bases on distances. Having unregular data like that, I mean, influence of height or weight would be much bigger than influence of age on distribution of points, distances between them. Good idea would be weighting of values. For instance a value of element would be a quotient of some maximum value or value’s probability from normal distribution.

How does it work with documents?

It is very similar way to clustering documents that present as example before. Documents are converted into vectors by weight of word inside, calculated from analysis whole set of documents. Next, the multidimensional points are assigned to clusters by distances from each others. Description of algorithms to create vectors from text and to clustering are topics for another post.

Cool article